Though I should first finish the LCDs I've already built, I've begun with a 3rd display. It's a 1024x768 pixel TFT from my old iBook. It has an LVDS FPD-Link connection, and I have searched the web for info about the panel and FPD-Link. I think I've got enough info to build it.

Major problems:

I need a special transmitter chip, preferably a 5V part. These chips are generally hard to find (hobbyists never use them), and 5V versions are even harder to find. But I'll get a quote today. :-)

Timing is at the upper end of what a hobbyist's project can handle: the pixel clock is 65 MHz, and may eventually be lowered to ~62 MHz.

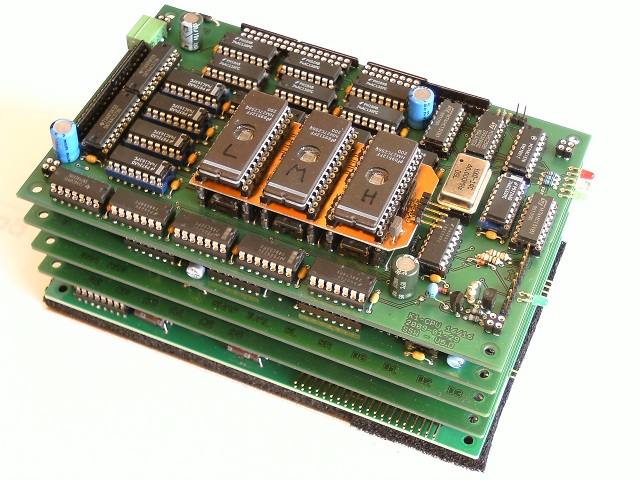

This requires at least 15ns RAM, which would result in very tight timing, or better 12ns RAM. And I need 1.5 MByte of it. Though I have plenty of RAM in stock, I opted to buy three 256Kx16Bit 12ns RAMs. The head count of ICs on the PCB is already very high.
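The figures above can be cross-checked with a little arithmetic. This is only a minimal sketch: the 16 bits per pixel are inferred from the 1.5 MByte figure and the 16 bit wide RAMs, and the three clock frequencies are simply the ones mentioned on this page.

```python
# Back-of-the-envelope check of the RAM size and pixel timing.
WIDTH, HEIGHT = 1024, 768      # panel resolution
BITS_PER_PIXEL = 16            # assumption: one 16-bit RAM word per pixel

pixels = WIDTH * HEIGHT                      # words needed for one frame
frame_bytes = pixels * BITS_PER_PIXEL // 8   # frame buffer size in bytes
chip_words = 3 * 256 * 1024                  # three 256K x 16 bit RAMs


def period_ns(freq_mhz):
    """Clock period in nanoseconds for a frequency given in MHz."""
    return 1000.0 / freq_mhz


print(f"pixels per frame : {pixels}")
print(f"frame buffer     : {frame_bytes / 2**20:.2f} MByte")
print(f"words in 3 RAMs  : {chip_words}")
for f_mhz in (65, 64, 62):
    print(f"{f_mhz} MHz pixel clock -> {period_ns(f_mhz):.2f} ns per pixel")
```

Three 256Kx16 chips hold exactly one frame, with no spare words. The clock period stays between roughly 15.4 and 16.1 ns over the 62 to 65 MHz range, which is why 15ns RAM leaves almost no margin.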

[Figure: Data flow on the XVGA controller]

[Figure: Control signals]

Project Page

The project page is .../IO-Boards/VGA/ on my home site. This is on my private computer, and everything I do here is directly visible on this page. Currently it contains a collection of spec sheets and the current state of the controller board design.

The Plan

The design ideas are as follows:

• The VRAM is addressed by a 20 bit counter cascade. Due to timing problems, the address is buffered by a set of 74574 latches, so the address is always delayed by one clock cycle. The RAM output data is fed directly into the FPD-Link transmitter, which is clocked by the same clock signal. Everything runs at 64MHz with a clock cycle of ~15.6ns.

• The slow signals, VSYNC, HSYNC and DE (Display Enable), are generated by an ATtiny. It also controls the count enable of the address counters, to stop them during HSYNC and VSYNC (more precisely, whenever DE is false). The ATtiny will be clocked at 16 MHz, synchronous with the 64MHz pixel clock.

The ATtiny will also generate the FFB (frame fly back) interrupt signal, which is very important:

• VRAM access from the CPU will be completely asynchronous to the pixel access for the display. It will simply override the signals for the display, resulting in 'snow'. Each access will 'destroy' the display of approx. 3 pixels. This allows me to access the VRAM without asserting the !WAIT signal on the bus. I have already checked the timing: writing is safe; reading is tight but should work.

Accessing the VRAM requires sending an address, followed by one data transfer. The address is 20 bit, so it has to be transferred in two chunks. I opted to split the address into two 10 bit packages, which translate directly into the X and Y pixel address. To reduce the number of bus transfers, I designed the address registers as counters as well. They provide an auto-increment feature, so that I only need to set the start address and can then read or write in burst mode, hopefully at the full bus bandwidth of 16Mwords/sec. The X address can auto-increment; the Y address can auto-increment and auto-decrement. I probably can't make the X address auto-decrement as well, because I simply don't have enough control lines for it. The 'control lines' are the bus's address lines, and there are only 6 of them.
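The addressing scheme can be modelled in a few lines. This is a toy sketch, not the real hardware: the class and function names are made up for illustration, and two details are assumptions, namely that Y forms the upper 10 bits of the 20 bit address and that each register wraps around on its own without a carry into the other.

```python
class VramAddress:
    """Toy model of the two 10-bit address registers (X and Y)."""

    MASK = 0x3FF  # 10 bits per register

    def __init__(self, x=0, y=0):
        self.x = x & self.MASK
        self.y = y & self.MASK

    def linear(self):
        # Assumption: Y is the upper half of the 20-bit VRAM address.
        return (self.y << 10) | self.x

    def step(self, dx=0, dy=0):
        """Advance the counters after one data transfer.

        dx may be 0 or +1 (X can only auto-increment),
        dy may be -1, 0 or +1 (Y can count in both directions).
        """
        if dx not in (0, 1) or dy not in (-1, 0, 1):
            raise ValueError("unsupported auto-increment mode")
        # Assumption: each register wraps separately, no carry between them.
        self.x = (self.x + dx) & self.MASK
        self.y = (self.y + dy) & self.MASK


def write_hline(vram, x, y, colors):
    """Burst-write a horizontal run: set the address once, then only data."""
    addr = VramAddress(x, y)
    for color in colors:
        vram[addr.linear()] = color
        addr.step(dx=1)


vram = {}                                 # sparse stand-in for the RAM chips
write_hline(vram, 10, 5, [0xF800, 0x07E0, 0x001F])
print(sorted(hex(a) for a in vram))
```

With the registers doubling as counters, a horizontal run costs two address transfers plus one transfer per pixel, and a vertical run works the same way with `dy` instead of `dx`.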

To avoid the 'snow' effect when accessing the VRAM, I plan to do most I/O during the vertical frame flyback, which may be up to 10% of the total frame time. The exact maximum number of lines during FFB for my display will be determined once everything is built, so it's nice that this is programmable, because it's done by the ATtiny. It will be slightly tricky to align the control signals of the ATtiny with the 4-pixel boundary (the ATtiny clock is the pixel clock ÷ 4), because the DE (display enable) signal for the FPD transmitter and the count enable signal for the address counter must not start or stop somewhere in the middle of a 4-pixel package, but exactly at its start or end. Otherwise the image on the TFT will be shifted by some pixels, missing some at the left side and displaying garbage at the right side.
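The 4-pixel alignment constraint can be expressed as a small check on the line timing. This is only a sketch: the function names are hypothetical, and the example numbers (1344 pixels per line, 296 pixels of blanking before the visible part) are placeholders borrowed from the common 1024x768 60 Hz PC timing, not values taken from the panel's spec sheet.

```python
PIXELS_PER_TICK = 4     # ATtiny clock = pixel clock / 4
ACTIVE_WIDTH = 1024     # visible pixels per line


def to_ticks(pixel_count):
    """Convert a pixel count to ATtiny clock ticks; reject misaligned values."""
    if pixel_count % PIXELS_PER_TICK:
        raise ValueError(f"{pixel_count} pixels is not a multiple of 4")
    return pixel_count // PIXELS_PER_TICK


def line_schedule(h_total, blank_before_active):
    """Return (tick where DE rises, tick where DE falls, ticks per line)."""
    start = to_ticks(blank_before_active)
    stop = start + to_ticks(ACTIVE_WIDTH)
    total = to_ticks(h_total)
    if stop > total:
        raise ValueError("visible area does not fit into the line")
    return start, stop, total


# Placeholder timing, see the note above.
print(line_schedule(1344, 296))
```

Every edge of DE and of the count enable then falls on a whole ATtiny tick, so the visible part of a line always spans exactly 256 ticks.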

Let's see how it all works!